General Information

GEOGALS is a seismic data processing company incorporated in 2011 in Tallinn (Estonia).

As an independent service provider, GEOGALS uses modern processing software and powerful hardware to process large volumes of 2D and 3D seismic data acquired in a range of geographic and geologic settings, including land, transition-zone and marine environments.

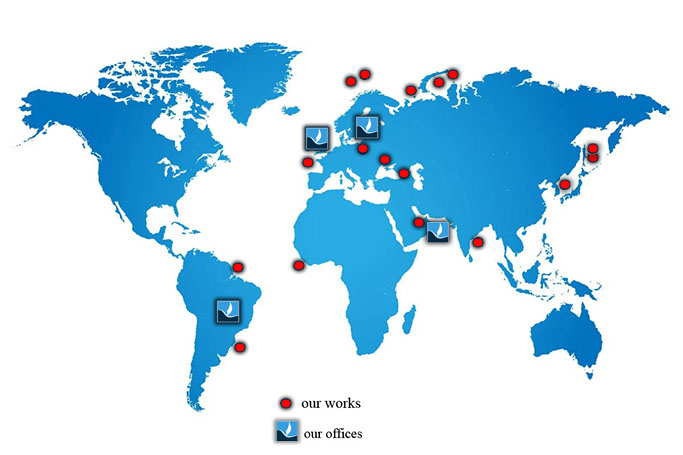

The company head office and its main Processing Center are located in Tallinn (Estonia). Regional offices are located in London (UK), Manama (Bahrain) and Asuncion (Paraguay).

Information about software

The main software tool for marine data processing is the REVEAL package from Shearwater.

We also use software products from other developers (Paradigm, Flatirons, RadEx Pro), depending on client requirements and the complexity of the geological setting.

Software packages:

Shearwater REVEAL (licensed)

Flatirons (licensed)

RadEx Pro (licensed)

Hardware Infrastructure Overview

The primary data center, located in Tallinn, provides a high-performance computing environment comprising more than 4,800 CPU cores and over 1.5 PB of usable storage capacity.

The IT infrastructure is built on industry-standard, enterprise-grade hardware and software technologies, including:

• Networking: Arista 25G/100G Ethernet switching infrastructure and Mellanox FDR InfiniBand fabric

• Compute infrastructure: HPE BladeSystem c7000 (Gen8/Gen10) and HPE DL-series servers

• Operating systems: CentOS Linux 7.9 and Rocky Linux 8.10

• Workload management: Slurm workload manager

• Parallel filesystem: BeeGFS distributed filesystem

• Configuration management and orchestration: SaltStack and Ansible

About Tallinn Processing Center

Management Infrastructure

The management layer is implemented as a highly available virtualization cluster based on Proxmox VE 8.3 and includes:

• Three (3) HPE DL380 Gen10 servers, each equipped with 192 GB RAM

• Shared enterprise storage system: HPE MSA 2052

• High-performance switching: Arista DCS-7060SX2
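As an illustration of why three servers are a common minimum for a highly available cluster, the sketch below works through majority-quorum arithmetic. It assumes the default arrangement of one vote per node and no external quorum device; the function names are hypothetical and are not part of Proxmox VE.

```python
def quorum_votes(total_votes: int) -> int:
    """Smallest number of votes that forms a strict majority."""
    return total_votes // 2 + 1


def tolerated_failures(nodes: int) -> int:
    """Nodes that can fail while the remainder still holds a majority.

    Assumption: each node carries exactly one vote.
    """
    return nodes - quorum_votes(nodes)


# Three management servers, as listed above.
print(quorum_votes(3))        # 2
print(tolerated_failures(3))  # 1
```

Under these assumptions, a three-node cluster keeps quorum with one server offline, whereas a two-node cluster would lose quorum on any single failure.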

CPU Cluster 1

• Four (4) HPE BladeSystem c7000 Gen3 chassis

• Each chassis contains sixteen (16) compute nodes

Node configuration:

• HPE BL460 Gen10

• Intel Xeon Gold 6240 processors (36 CPU cores per node)

• 384 GB DDR4 RAM

• 4.0 TB SSD storage

Total capacity: 64 nodes / 2,304 CPU cores

CPU Cluster 2

• Eight (8) HPE BladeSystem c7000 Gen2 chassis

• Each chassis contains sixteen (16) compute nodes

Node configuration:

• HPE BL460 Gen8

• 2 × Intel Xeon E5-2680 v2 processors (20 CPU cores per node)

• 256 GB DDR3 RAM

• 3.2 TB SSD storage

Total capacity: 128 nodes / 2,560 CPU cores
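The totals for both CPU clusters follow from chassis count × nodes per chassis × cores per node. A minimal sketch that reproduces the arithmetic from the figures listed above; the `Cluster` class and its field names are illustrative only and do not correspond to any GEOGALS tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cluster:
    """Illustrative description of a blade cluster (hypothetical names)."""
    name: str
    chassis: int
    nodes_per_chassis: int
    cores_per_node: int

    @property
    def nodes(self) -> int:
        return self.chassis * self.nodes_per_chassis

    @property
    def cores(self) -> int:
        return self.nodes * self.cores_per_node


# Figures taken from the CPU Cluster 1 and CPU Cluster 2 descriptions.
CLUSTERS = [
    Cluster("CPU Cluster 1", chassis=4, nodes_per_chassis=16, cores_per_node=36),
    Cluster("CPU Cluster 2", chassis=8, nodes_per_chassis=16, cores_per_node=20),
]


def total_cores(clusters) -> int:
    """Sum of CPU cores across all listed clusters."""
    return sum(c.cores for c in clusters)


for c in CLUSTERS:
    print(f"{c.name}: {c.nodes} nodes / {c.cores:,} CPU cores")
print(f"Combined: {total_cores(CLUSTERS):,} CPU cores")
# CPU Cluster 1: 64 nodes / 2,304 CPU cores
# CPU Cluster 2: 128 nodes / 2,560 CPU cores
# Combined: 4,864 CPU cores
```

The combined figure of 4,864 cores is consistent with the "more than 4,800 CPU cores" quoted in the overview; the CPU cores of the GPU servers are not counted here.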

GPU Cluster

• Six (6) Supermicro SYS-1028GR-TR high-performance GPU servers

Node configuration:

• 2 × Intel Xeon E5-2697A v4 processors (32 CPU cores per node)

• 256 GB DDR4 RAM

• 2 × NVIDIA V100S GPUs

Aggregate GPU capacity:

• 61,440 CUDA cores

• 7,680 Tensor cores
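The aggregate GPU figures can be checked from the server count and the per-GPU core counts. The sketch below assumes the published NVIDIA V100S specification of 5,120 CUDA cores and 640 Tensor cores per GPU; the constant and function names are illustrative.

```python
# Assumed per-GPU figures for the NVIDIA V100S (Volta architecture).
CUDA_CORES_PER_GPU = 5120
TENSOR_CORES_PER_GPU = 640


def gpu_totals(servers: int, gpus_per_server: int) -> dict:
    """Return aggregate GPU, CUDA core and Tensor core counts."""
    gpus = servers * gpus_per_server
    return {
        "gpus": gpus,
        "cuda_cores": gpus * CUDA_CORES_PER_GPU,
        "tensor_cores": gpus * TENSOR_CORES_PER_GPU,
    }


# Six servers with two V100S GPUs each, as listed above.
totals = gpu_totals(servers=6, gpus_per_server=2)
print(totals)
# {'gpus': 12, 'cuda_cores': 61440, 'tensor_cores': 7680}
```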

Distributed Storage System (BeeGFS)

The storage subsystem is based on the BeeGFS parallel filesystem and includes:

• Eight (8) storage nodes with a total of fourteen (14) storage targets

• Dedicated metadata servers with NVMe-backed storage

• Centralized management server

• High-speed FDR InfiniBand storage network

Archival Storage

Long-term data retention is supported by a hybrid archival storage solution consisting of:

• NetApp FAS2620 system with DS460C disk shelf (total capacity: 344 TB)

• IBM 3592 tape library with support for E05, E07, E08, and LTO-6 formats

Availability and Reliability

The infrastructure is designed to ensure a high level of availability, fault tolerance, and service continuity.

Key availability features include:

• Redundant architecture: Critical components, including compute nodes, storage systems, and network infrastructure, are deployed with redundancy to eliminate single points of failure.

• High-availability virtualization layer: The Proxmox VE cluster supports live migration and automatic restart of virtual machines in case of hardware failure.

• Resilient storage subsystem: The BeeGFS distributed filesystem ensures data replication and fault tolerance across multiple storage nodes.

• High-speed networking: The use of redundant Arista switching and InfiniBand fabric minimizes network bottlenecks and ensures low-latency communication.

• Monitoring and alerting: Continuous infrastructure monitoring enables proactive detection and resolution of potential issues.

• Planned maintenance procedures: Maintenance activities are scheduled to minimize service disruption and are communicated in advance.

Security

The infrastructure is designed in accordance with industry best practices to ensure the confidentiality, integrity, and availability of data and services.

Key security measures include:

• Network security: Segmentation of management, storage, and compute networks; controlled access via firewalls and ACLs.

• Access control: Role-based access control (RBAC) is implemented across all systems. Administrative access is restricted and granted based on the principle of least privilege.

• Authentication and authorization: Centralized authentication mechanisms (FreeIPA integration) are used where applicable.

• Data protection: Data is protected through access policies, filesystem-level controls, and secure storage configurations.

• Secure administration: All administrative access is performed over secure protocols (e.g., SSH, HTTPS).

• Patch and vulnerability management: Regular updates and security patching are applied to operating systems and software components.

• Audit and logging: System activity and access logs are collected and retained for auditing and incident investigation purposes.

• Backup and recovery: Regular backups and archival procedures ensure data recoverability in case of failure or data loss incidents.

Datacenter

The data center of Levira in Tallinn is a highly reliable and secure infrastructure facility, compliant with international standards for quality and information security, including ISO 27001, ISO 9001, and ISO 14001, as well as national ISKE requirements.

The physical and engineering infrastructure of the data center is designed in accordance with high availability and business continuity principles. Full redundancy is implemented across all critical systems, including power supply (multiple independent feeds, UPS, and diesel generators), cooling systems, and network infrastructure. The facility's architecture ensures fault isolation and resilience against external disruptions.

The data center demonstrates a high level of operational reliability and is designed to support strict SLA commitments, backed by extensive experience in delivering mission-critical services for the telecommunications and media industries.

The network infrastructure follows a carrier-neutral model, with connectivity to multiple independent providers and diverse fiber routes, ensuring high availability, fault tolerance, and consistently low-latency connectivity across the Northern European region.

More detailed information is available on the official website: www.levira.com


Staff Information

GEOGALS employs 15-20 specialists: geophysicists, geologists and IT staff with strong professional track records. The combined expertise of our personnel and decades of experience across a wide range of petroleum provinces allow us to carry out projects of any size and complexity. We are experienced in applying advanced processing techniques such as 3D SRME, WEMA, 5D regularization, RTM, FWI, Q-PSDM and ES360.